Intel 4000 does it support shader model 3.0

- INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 UPDATE

- INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 DRIVER

- INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 WINDOWS 10

- INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 PROFESSIONAL

Does the Intel Graphics Media Accelerator 950 support Shader Model 3.0? (Wiki User)

Not fully. The GMA 950 implements Pixel Shader 2.0 in hardware and runs vertex shaders in software on the CPU, so it does not meet a Shader Model 3.0 requirement. It is also possible that this technology is associated with the description of this patent.

INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 WINDOWS 10

Hardware-accelerated GPU scheduling: It allows the video card to manage its video memory directly, which in turn significantly improves minimum and average FPS and thereby reduces latency. It works regardless of the API used by games and applications (DirectX, Vulkan, or OpenGL). It is supported by Nvidia GeForce video cards starting from the 10 series, as well as integrated graphics from Intel HD 500 or later, but AMD Radeon is not supported yet due to the lack of insider drivers. (According to observations made before the release of Windows 10 version 2004, the option requires hardware support for Shader Model 6.3 or higher, which can be checked with AIDA64 but not with GPU-Z, as the latter does not display reliable information.)

Karthik Vaidyanathan (KV), Intel's Principal Engineer for XeSS technology, has been interviewed by Usman Pirzada from Wccftech.

INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 DRIVER

Available in Windows 10 Insider with Nvidia Driver 450.12 and Intel 27.20.100.7859 only, in insider builds starting from 1.84.

Intel - AW8063801108900 - Intel Core i7 i7-3540M Dual-core (2 Core) 3 GHz Processor - Socket G2 - OEM Pack - 512 KB - 4 MB Cache - 5 GT/s DMI - Yes - 22 nm - 3 Number of Monitors Supported - Intel HD Graphics 4000 Graphics - 35 W - 221 °F (105 °C) - 128.02

INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 PROFESSIONAL

The World's Most Powerful Single Slot Professional Graphics Card. Designers and engineers can create models with larger assemblies and larger numbers of.

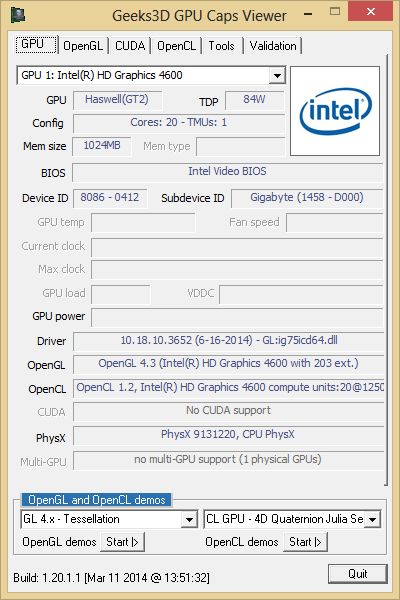

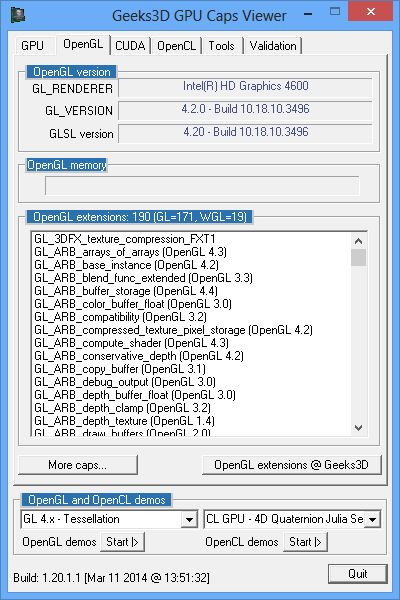

The HD Graphics 4000 was a mobile integrated graphics solution by Intel, launched on May 14th, 2012. Built on the 22 nm process, and based on the Ivy Bridge GT2 graphics processor, the device supports DirectX 11.1. It features 128 shading units, 16 texture mapping units, and 2 ROPs.

Your 2000%+ only applies in specific games where his machine gets like 3 fps or something.

A newer CPU is a considerable leap in itself too. I went from a 3570K to an 8700K with the same GTX 1060 and it is noticeably faster in WvW zerging, where the older CPU croaked. And GW2 doesn't exactly use those threads to begin with. But of course, that's an entirely new machine otherwise, so it's expensive.

Either way, yes, if OP has room for a GPU in the case then get a GPU first, lol.

Then go to the Nvidia Control Panel (depends if you have Nvidia) and make sure to select the GeForce card in the PhysX tab. This helped my friend after everything we tried.

INTEL 4000 DOES IT SUPPORT SHADER MODEL 3.0 UPDATE

An example would be the GTX 1050, which can be had for around 100-120 bucks and is around 2,000-3,000% faster than the GPU on the i5 you have.

Actually, the iGPU of the 8th gen compared to the 3rd gen would be around twice as fast, which is a considerable leap. Notebookcheck actually has GW2 benchmarks on the old HD 4000 (who knew, lol), and a tested GTX 1080 laptop is only 1000% faster at ultra settings, and that's about twice as fast as a GTX 1050, I'd wager.

Make sure to download the latest update from the official website.
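The percentages thrown around in these posts are easy to misread, so here is a rough sketch of the arithmetic. It assumes "N% faster" is meant literally, i.e. a speed of (1 + N/100) times the baseline; the posters may have meant something looser. The function names are made up for illustration.

```python
def percent_faster_to_multiplier(percent_faster):
    """Convert 'N% faster' into a speed multiplier, read literally."""
    return 1.0 + percent_faster / 100.0


def multiplier_to_percent_faster(multiplier):
    """Convert a speed multiplier back into 'N% faster'."""
    return (multiplier - 1.0) * 100.0


if __name__ == "__main__":
    # Figures quoted in the thread, not measurements of our own.
    gtx1080_vs_hd4000 = percent_faster_to_multiplier(1000)
    # The post guesses a GTX 1080 is about twice as fast as a GTX 1050.
    gtx1050_vs_hd4000 = gtx1080_vs_hd4000 / 2
    print("GTX 1080 multiplier:", gtx1080_vs_hd4000)
    print("GTX 1050 multiplier:", gtx1050_vs_hd4000)
    print("GTX 1050 percent faster:", multiplier_to_percent_faster(gtx1050_vs_hd4000))
```

Read this way, the Notebookcheck-based estimate puts a GTX 1050 far below the 2,000-3,000% figure, which is the point the reply is making.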

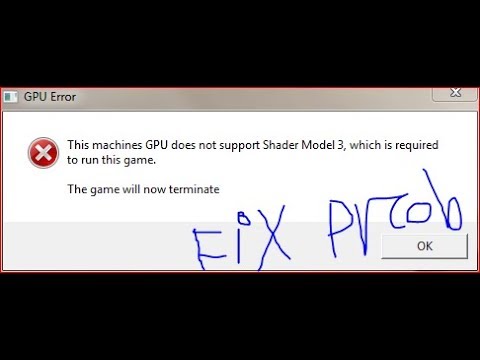

Since Quadro FX 4000 does not support DirectX 11 or DirectX 12, it might not be able to run all the latest games. The NV40 graphics processor is an average sized chip with a die area of 287 mm² and 222 million transistors.

Graphics: 128 MB VRAM DirectX 9.0c-compliant, Shader 3.0-enabled video card. Additional: Tom Clancy's Rainbow Six is not fully compatible with Windows 7. If you are not sure what Pixel Shader level your video card can support, there is a chance that your video card will not be capable of running a game that.

The onboard GPU from the newer i5 is not going to be a large gain, and you would be looking at a new motherboard, RAM and CPU, when for less than that you can just get a REAL GPU and see much, much larger gains in FPS. You also don't need some crazy GPU; a simple mid or even lower-mid range card will be miles ahead of the one on the i5. GPU/CPU also has nothing to do with ping, as that is network related and is a problem in itself.
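For the question in the title, a rough way to compare a card against a "Shader 3.0-enabled" requirement is to map its DirectX hardware generation to the highest shader model that generation exposes. The sketch below is only an illustration: the table is general Direct3D background rather than anything stated in the posts, and the function names are hypothetical. The DirectX 11.1 figure for the HD Graphics 4000 is the one quoted in the spec blurb earlier.

```python
# Highest shader model exposed by each Direct3D hardware generation.
# General Direct3D background (an assumption of this sketch, not from the thread).
SHADER_MODEL_BY_DIRECTX = {
    "9.0": (2, 0),
    "9.0c": (3, 0),
    "10": (4, 0),
    "10.1": (4, 1),
    "11": (5, 0),
    "11.1": (5, 0),
}


def max_shader_model(directx_version):
    """Return (major, minor) of the highest shader model for a DirectX level."""
    return SHADER_MODEL_BY_DIRECTX[directx_version]


def meets_requirement(directx_version, required=(3, 0)):
    """True if hardware of this DirectX level covers the required shader model."""
    return max_shader_model(directx_version) >= required


if __name__ == "__main__":
    # HD Graphics 4000 is listed above as a DirectX 11.1 part.
    major, minor = max_shader_model("11.1")
    print(f"HD Graphics 4000: Shader Model {major}.{minor}")
    print("Meets Shader Model 3.0 requirement:", meets_requirement("11.1"))
```

Under these assumptions a DirectX 11.1 part like the HD Graphics 4000 clears a Shader Model 3.0 requirement with room to spare; whether a given game actually runs well on it is a separate question of performance and drivers.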